Using Models

Welcome back to Stable Diffusion basics. In this lesson, we will create a realistic portrait of a woman having a drink, sitting next to a river, as shown above.

PREREQUISITES

You should have already created a fat cat, activated and logged in to your private creation area, and be ready to start making realistic people. Let’s begin.

Lesson Goals

- Learn how to browse models

- Choose a model and use it in the system

This lesson will teach you how to work with Models, and we’re not talking about supermodels like Naomi Campbell. In lesson 1, we used the model called SDXL. A model is simply a type of computer file used in AI applications. This is an oversimplification, but it is enough to understand what is meant by “downloading a model” or “choosing a model”. There is more information about models at the end of this tutorial.

Open your WebUI and let’s use a model:

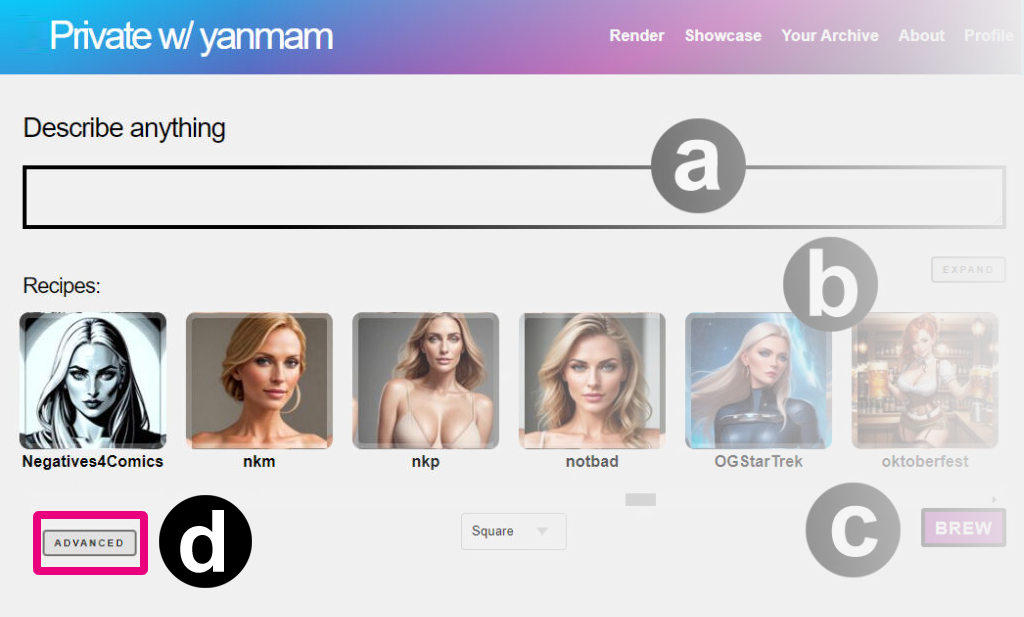

Step 1: Click on Advanced Mode (D) to see all models

Tip: Do you want to make Advanced your default?

Edit the URL of your creation page to https://whatever.graydient.ai/dashboard/ and you’ll find a checkbox that says “turn off brew”. Hit save at the bottom of the page and you’ll always get advanced by default.

Step 2: Copy this prompt

It’s good practice to describe who, what, how, and where, in this order. For example:

A portrait of a beautiful 21 year old Swedish woman drinking a beer next to the river in Amsterdam

The models menu appears in the advanced section of our software, as shown in Figure (D) above.

Step 3: Click the concepts button and add Photon

Find <photon> in the models selector by clicking the button in Figure (D) above.

Photon is a full model, similar to SDXL. It is a fine-tuned model focused on realistic images, greatly improving on the original Stable Diffusion 1.5. Getting to know a handful of models, and their strengths, will significantly improve your ability as an AI creator. It’s like knowing what tool to select from your toolbox.

Tip: When you already know the name of a model, you don’t have to select it from the visual menu. You can simply type <photon> into the positive prompt box, shown in Figure (A), without opening the visual menu.
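To make the tip concrete, here is a minimal Python sketch that assembles the Step 2 prompt in the who, what, how, where order and appends a model tag. The function name `build_prompt` and the choice to put the tag at the end of the prompt are assumptions made for illustration; the lesson only says the tag goes somewhere in the positive prompt.

```python
def build_prompt(who, what, how, where, model_tag=None):
    """Join the prompt parts in who/what/how/where order.

    Hypothetical helper for illustration; it is not part of the WebUI.
    Empty parts are skipped, and the model tag (if any) is appended
    at the end wrapped in angle brackets, e.g. <photon>.
    """
    parts = [who, what, how, where]
    prompt = " ".join(p.strip() for p in parts if p and p.strip())
    if model_tag:
        prompt = f"{prompt} <{model_tag}>"
    return prompt


prompt = build_prompt(
    who="A portrait of a beautiful 21 year old Swedish woman",
    what="drinking a beer",
    how="",  # this example prompt has no separate "how" part
    where="next to the river in Amsterdam",
    model_tag="photon",
)
print(prompt)
```

The resulting string is what you would paste into the positive prompt box instead of picking Photon from the visual menu.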

Step 4: Click Render

Please wait a few seconds for your results to appear, and you’re done! You’ve just selected one model out of the thousands of possibilities in the system.

Learn how to use Embeddings (Textual Inversions) to take your art to the next level.

Prompting tips:

- One base model per image. You can add many models to an image, but the last mentioned “full model”, also called a checkpoint or base model, is the one that will win.

- SDXL models are not compatible with other Stable Diffusion models. As they say in the movies, never mix the streams. At the moment, the most widely used version of Stable Diffusion is 1.5 due to its massive year-old ecosystem. We support both, as well as Stable Diffusion 2.1, which can be triggered with <sdv21>. The software will warn you if you’ve mixed up the Stable Diffusion families; it simply won’t render until there is alignment.
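The two rules above can be sketched in a few lines of Python. This is only an illustration of the logic, not how the service is actually implemented. The registry contents, the `<sdxl>` tag name, the `example-lora` entry, and the family labels are all assumptions made up for the example.

```python
import re

# Hypothetical registry: tag name -> (kind, family). Illustrative only;
# the real system hosts thousands of models and may classify them differently.
MODEL_REGISTRY = {
    "photon": ("checkpoint", "sd15"),
    "sdxl": ("checkpoint", "sdxl"),      # tag name assumed
    "sdv21": ("checkpoint", "sd21"),
    "example-lora": ("lora", "sd15"),    # made-up add-on model
}

# Matches tags written as <name> inside a prompt.
TAG_PATTERN = re.compile(r"<([\w.-]+)>")


def find_model_tags(prompt):
    """Return all known model tags in the order they appear."""
    names = [name.lower() for name in TAG_PATTERN.findall(prompt)]
    return [name for name in names if name in MODEL_REGISTRY]


def resolve_base_model(prompt):
    """Rule 1: the last mentioned checkpoint wins. None if no checkpoint."""
    checkpoints = [
        tag for tag in find_model_tags(prompt)
        if MODEL_REGISTRY[tag][0] == "checkpoint"
    ]
    return checkpoints[-1] if checkpoints else None


def families_align(prompt):
    """Rule 2: add-on models must share the winning checkpoint's family."""
    base = resolve_base_model(prompt)
    if base is None:
        return True  # nothing to compare against in this sketch
    base_family = MODEL_REGISTRY[base][1]
    return all(
        MODEL_REGISTRY[tag][1] == base_family
        for tag in find_model_tags(prompt)
        if MODEL_REGISTRY[tag][0] != "checkpoint"
    )


print(resolve_base_model("a fat cat <sdxl> <photon>"))
print(families_align("a portrait <sdxl> <example-lora>"))
```

In this sketch, a prompt that names both `<sdxl>` and `<photon>` resolves to Photon because it comes last, and pairing an SD 1.5 add-on with an SDXL checkpoint is flagged as a family mismatch.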

Glossary

Models are just files, very often large files. “Models” (also known as checkpoints, loras, inversions, or concepts) is a blanket term for “AI models”, meaning there is a computer somewhere with a literal file (the model is a file) that can produce a certain effect.

In lesson 1, a model called Stable Diffusion XL (SDXL) had already ingested literally millions of photos of cats. That was the product of a very costly AI training exercise by the creators of Stable Diffusion. Models are the end-products of many hours of rigorous training that a computer or group of computers perform to understand a pattern.

A model is different from a database, because models store fuzzy patterns rather than specific data. This idea of “training” a model refers to ingesting many photos and looking for similar patterns. That’s why the AI knows what a cat looks like.

Checkpoint Models – Checkpoints are also known as “full models” or “base models”. They are often 2-10GB files. A checkpoint sets the overall art style of a composition and has the most impact on the outcome of an image. There isn’t a way to reduce the “weight” or influence of a checkpoint: if you select <photon>, you are getting 100% of what Photon can do. SDXL is also an example of a checkpoint. When no checkpoint is specified, your WebUI may default to the most basic version, good old Stable Diffusion 1.5.

Unless you’re researching something retro, you probably don’t want this, so always remember to pick the right model for the job before prompting.

At the time of this update, we are hosting over 3000 models, which together weigh in at a hefty 2 terabytes. For most people, this would be impossible to store locally on their smartphones or computers, which is one of the primary reasons we created our service. For the AI enthusiast who wants all of this power on the go, we’re here for you.
